List of files with a search string within the first 15 lines

As part of the “Ask Robert” campaign, I recently got the following question:

“Assume I have a huge directory of files of different types. How can I - with the help of the shell and awk - get a list of all *.tex and *.txt files within this directory that contain a certain string within the first N lines?”

(For the sake of readability I slightly edited the wording. Ulrike - I hope you’re ok with this.)

This is a great task: first, to demonstrate the power of the Linux command line, and second, to give you an understanding of how to tackle this type of problem.

And as always - there are many more ways to solve this problem.

So take this as an inspiration for similar tasks.

Ok - so let’s start.

This is what we have:

- many, many files within a directory

- some of them are *.txt and *.tex files

And that’s what we want:

- The name of every *.txt or *.tex file that contains a search phrase within the first N lines

Let’s start with the part that searches the files for the search phrase.

Do the search with grep … the obvious one

The tool that first comes to mind when talking about searching for a phrase within a file is the command grep.

If you, for example, want to search for the phrase “HELLO” in a file called document.txt, you could do the following:

grep HELLO document.txt

This would give you all the lines from document.txt containing the phrase.

But grep always searches the whole file. If you need to search only within a certain range of lines, you have to use a different approach.
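A quick way to see this limitation: grep -l prints only the names of files that contain a match, which sounds close to what we want, but grep still reads every file to the end. In this sketch the sample files are made up for illustration and live in a temporary directory; late.txt is listed although its only HELLO sits on line 20.

```shell
dir=$(mktemp -d) || exit 1
trap 'rm -rf "$dir"' EXIT
cd "$dir" || exit 1

# Made-up sample files
printf 'HELLO on line 1\n' > early.txt
# 19 filler lines, then HELLO on line 20
awk 'BEGIN { for (i = 1; i < 20; i++) print "filler"; print "HELLO" }' > late.txt

# -l: print only the names of files that contain a match
grep -l HELLO early.txt late.txt
# early.txt
# late.txt   <- listed although its only match is on line 20
```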

Ask head for help

One approach is to combine grep with head, for instance to search only the first 15 lines of document.txt:

head -n 15 document.txt | grep HELLO

Now we are searching only within the first 15 lines - but the next step would be to output only the filename if there is a match.
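For comparison, here is a minimal sketch of how the head/grep route could report just the file names: a small loop that runs head and grep -q once per file. The function name list_matches and the sample files are hypothetical, made up for this illustration.

```shell
dir=$(mktemp -d) || exit 1
trap 'rm -rf "$dir"' EXIT
cd "$dir" || exit 1

# Made-up sample data
printf 'intro\nHELLO world\n' > a.txt   # phrase on line 2
printf 'nothing here\n'       > b.txt   # phrase missing

# Hypothetical helper: print the name of every .txt file whose first
# 15 lines contain HELLO. grep -q prints nothing; only its exit status
# tells us whether it found a match.
list_matches() {
    for f in *.txt; do
        if head -n 15 "$f" | grep -q HELLO; then
            printf '%s\n' "$f"
        fi
    done
}

list_matches   # prints: a.txt
```

It works, but it starts two processes per file and is clearly no longer a one-liner.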

And because I want to create a cool-looking one-liner here and not a full-blown shell script, let’s tackle the problem in a different way.

Let’s have a look at the awk-tool Ulrike mentioned in her question …

A different approach: use awk for doing the search

awk is a Linux command-line tool that is part of nearly every Linux installation.

The main purpose of this tool is to process structured text-based data. And therefore it has everything on board we need here …

Let’s start with using awk to search the whole file “document.txt” for lines containing HELLO and print them out - just like grep would do:

awk '/HELLO/{print}' document.txt

Yes - this looks a bit awkward (sorry for that lame pun 😉), but it’s really easy to understand once you know just a few basics:

The part '/HELLO/{print}' is the “thing” awk shall do for every single line of the given file.

Within this “thing”, the part between the curly brackets ({...}) is the action awk shall execute.

And the part before the curly brackets says when - for which lines - you wanna see the action executed. Without this first part, awk would execute the action for every single line it reads.

<CONDITION> { <ACTION> }
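To make the <CONDITION> { <ACTION> } shape concrete, this sketch feeds three made-up lines into awk and varies the two parts. It also shows one detail not covered above: if you leave out the action, awk falls back to print.

```shell
# Three made-up input lines
input='apple
HELLO banana
cherry'

# Action only (no condition): runs for every line
printf '%s\n' "$input" | awk '{ print }'            # prints all 3 lines

# Condition and action: runs only for lines matching the regex
printf '%s\n' "$input" | awk '/HELLO/ { print }'    # prints: HELLO banana

# Condition only: awk uses its default action, print
printf '%s\n' "$input" | awk '/HELLO/'              # prints: HELLO banana
```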

Let awk print something out with print …

The command print used in the command-line example above is used to … uhm … print something out. Without any arguments, print outputs the entire line that is currently being processed.
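A small illustration of what “without arguments” means here: print on its own is the same as print $0 (the whole line), while print $2 prints only the second whitespace-separated field. The sample line is made up.

```shell
# Made-up sample line
line='HELLO brave new world'

echo "$line" | awk '{ print }'      # whole line:  HELLO brave new world
echo "$line" | awk '{ print $0 }'   # same thing:  $0 is the whole line
echo "$line" | awk '{ print $2 }'   # 2nd field:   brave
```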

Let awk only print out lines matching a search pattern …

As said - the part in front of the curly brackets is the condition that decides whether the action is executed for a line or not.

In the example above, the condition for executing the action is a string between slashes (/…/).

That means this string is a regular expression that serves as the condition:

If the line is matched by the regular expression, then - and only then - awk shall run the action for this line.

So, simply put,

awk '/HELLO/{print}' document.txt

gives you exactly the same output as

grep HELLO document.txt

would give you.
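A quick way to check this yourself, sketched here with a made-up stand-in for document.txt in a temporary directory: write both outputs to files and compare them with cmp. This holds for a plain word like HELLO. For patterns with special characters, keep in mind that grep uses basic regular expressions by default while awk uses extended ones, so grep -E is the closer match.

```shell
dir=$(mktemp -d) || exit 1
trap 'rm -rf "$dir"' EXIT

# Made-up stand-in for document.txt
printf 'one\nsay HELLO\ntwo\nHELLO again\n' > "$dir/document.txt"

awk '/HELLO/{print}' "$dir/document.txt" > "$dir/awk.out"
grep HELLO "$dir/document.txt"          > "$dir/grep.out"

# cmp succeeds (and prints nothing) when both files are byte-for-byte identical
cmp "$dir/awk.out" "$dir/grep.out" && echo "identical output"
```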

But now let’s add a little awk specific magic: The condition for running an action with awk can contain a lot more than just a regular expression.

Print out matching lines only, if we are within the first 15 lines of the file

Or in other words: Do the search only for the first 15 lines of the file.

For this we can use the awk built-in variable FNR. It contains the number of the current record within the current input file. Since awk treats every line as one record by default, this is simply the number of the line currently being processed.

With this variable - in combination with a logical AND (&&) - we do the search only if we are within the first 15 lines of the file:

awk 'FNR <= 15 && /HELLO/{print}' document.txt

Cool, huh?

Ok - but for now we have only duplicated the behavior of

head -n 15 document.txt | grep HELLO
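To check that the awk version and head -n 15 | grep really agree, this sketch generates a hypothetical 30-line document.txt with HELLO on lines 10 and 20. Both commands report only the hit on line 10.

```shell
dir=$(mktemp -d) || exit 1
trap 'rm -rf "$dir"' EXIT
cd "$dir" || exit 1

# Made-up 30-line document.txt: HELLO on line 10 and on line 20
awk 'BEGIN { for (i = 1; i <= 30; i++) { if (i == 10 || i == 20) print "HELLO " i; else print "line " i } }' > document.txt

awk 'FNR <= 15 && /HELLO/{print}' document.txt   # prints: HELLO 10
head -n 15 document.txt | grep HELLO              # prints: HELLO 10
```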

What’s the advantage of using awk here (apart from the really nerdy-looking command line ;-))?

Well - let’s take the next step: we are not interested in all the matching lines. Instead, we are interested only in the filename if there is a match.

Use awk to print out just the filename

And awk can do this for us: instead of printing out the matching line, we use a second built-in awk variable, FILENAME, which holds the name of the file currently being processed.

To print out just the name of the file if our condition is true, use print FILENAME instead of the plain print command:

awk 'FNR <= 15 && /HELLO/{print FILENAME}' document.txt
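One detail worth knowing: FILENAME contains the name exactly as it was handed to awk, including any path. That is why the find-based version later on prints the path relative to the starting directory. Sketched with a made-up sample file:

```shell
dir=$(mktemp -d) || exit 1
trap 'rm -rf "$dir"' EXIT
cd "$dir" || exit 1

printf 'intro\nHELLO there\n' > document.txt   # made-up sample file

awk 'FNR <= 15 && /HELLO/{print FILENAME}' document.txt     # prints: document.txt
awk 'FNR <= 15 && /HELLO/{print FILENAME}' ./document.txt   # prints: ./document.txt
```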

We are getting closer and closer to a solution …

A little problem to solve at this step is: If the file contains multiple lines matching our condition, then awk would print out the name of the file multiple times.

Remove duplicate output lines with uniq

One way to solve this is to simply “throw away” duplicate lines from the output of awk.

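For this, we could “pipe” the output of awk straight to the tool uniq, without any further parameters. uniq only removes duplicate lines that directly follow each other, but that is all we need here: awk finishes one file before it starts with the next, so repeated filenames always appear in a row: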

awk 'FNR <= 15 && /HELLO/{print FILENAME}' document.txt | uniq
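The sketch below shows the effect of uniq on a made-up file with two hits in its first 15 lines. The last command is a variation I am adding here, not part of the approach above: an awk array (named seen, an arbitrary choice) remembers which files were already reported, so uniq is not needed at all.

```shell
dir=$(mktemp -d) || exit 1
trap 'rm -rf "$dir"' EXIT
cd "$dir" || exit 1

# Made-up file with the phrase on two of its first 15 lines
printf 'HELLO\nfoo\nHELLO again\n' > document.txt

# Without uniq: the name is printed once per matching line (twice here)
awk 'FNR <= 15 && /HELLO/{print FILENAME}' document.txt

# With uniq: adjacent duplicate lines collapse into one
awk 'FNR <= 15 && /HELLO/{print FILENAME}' document.txt | uniq

# Variation without uniq: seen[FILENAME]++ evaluates to 0 on the first hit,
# so !seen[FILENAME]++ is true only once per file
awk 'FNR <= 15 && /HELLO/ && !seen[FILENAME]++ {print FILENAME}' document.txt
```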

Did I say that we’re getting closer and closer to a solution?

Great. But now let’s tackle the remaining part of the task. We do not want to process one single file only - instead we wanna process all the *.tex and *.txt files within a directory.

Process multiple files at once

And I bet you have an idea for a solution too:

Instead of giving just one single filename to awk, we can give it a whole list of files by using wildcard patterns, which the shell expands into the matching filenames before awk even starts:

awk 'FNR <= 15 && /HELLO/{print FILENAME}' *.tex *.txt | uniq
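This is also where FNR pays off. awk has two line counters: NR counts records across all input files, while FNR starts again at 1 for every new file. With NR in the condition, only the first 15 lines of the first file would be searched. A tiny demonstration with two made-up files:

```shell
dir=$(mktemp -d) || exit 1
trap 'rm -rf "$dir"' EXIT
cd "$dir" || exit 1

printf 'a1\na2\n' > one.txt   # made-up sample files
printf 'b1\nb2\n' > two.txt

# NR keeps counting across files, FNR restarts at 1 for every file
awk '{ print FILENAME, NR, FNR }' one.txt two.txt
# one.txt 1 1
# one.txt 2 2
# two.txt 3 1
# two.txt 4 2

# Condition on FNR: first line of every file; on NR: first line overall
awk 'FNR == 1 {print FILENAME}' one.txt two.txt   # one.txt, then two.txt
awk 'NR == 1 {print FILENAME}' one.txt two.txt    # one.txt only
```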

This works as intended and this could be the final solution for the question at the beginning.

But why “could”?

Did you read in the question that we have a “huge directory” of files?

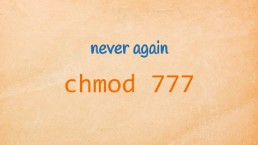

Avoid “Argument list too long” errors …

If we assume a directory with hundreds of thousands or even millions of files, then we could have a problem with the last approach:

In this scenario (a huge directory), the shell expands the patterns “*.tex *.txt” into a really huge list of filenames before awk is even started. If that list exceeds the system’s limit for the arguments passed to a new program, the command fails with an “Argument list too long” error.
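The limit behind this error can be queried with getconf. The value differs between systems (on Linux it typically depends on the stack size limit), so treat the number as specific to the machine you run it on:

```shell
# Maximum size in bytes for the arguments (plus environment) of a new program
getconf ARG_MAX
```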

How to solve this?

Well - we could simply use the command “find” to search for the files we are interested in, and let find hand them over to awk in portions the system can handle.

Use find to process the files in batches and to add recursion

A nice side effect of using find is that we can now search entire directory trees recursively for the files we are interested in.

Do you know find?

If not: To list all regular files in a given directory “myfiles” (and all its subdirectories) matching the pattern “*.tex” or “*.txt”, we would use the find command like this:

find myfiles -type f \( -name "*.tex" -o -name "*.txt" \)
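A small sketch to try this out, using a made-up myfiles tree in a temporary directory. find descends into sub and sub/deeper on its own and skips notes.md. The sort is only there to get a stable order, since find lists files in directory order.

```shell
dir=$(mktemp -d) || exit 1
trap 'rm -rf "$dir"' EXIT
cd "$dir" || exit 1

# Made-up directory tree
mkdir -p myfiles/sub/deeper
touch myfiles/a.tex myfiles/notes.md myfiles/sub/b.txt myfiles/sub/deeper/c.tex

find myfiles -type f \( -name "*.tex" -o -name "*.txt" \) | sort
# myfiles/a.tex
# myfiles/sub/b.txt
# myfiles/sub/deeper/c.tex
```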

This looks a little weird with the escaped brackets, but we need them for our example:

We are looking for regular files (-type f) whose names also match *.tex or *.txt (-name "*.tex" -o -name "*.txt").

So our logic reads like this:

<regular-file> AND ( <name matches *.tex> OR <name matches *.txt> )

And at the command line we need to escape the brackets with a backslash. Otherwise the shell would interpret them itself (it uses parentheses for subshells) instead of passing them on to find.
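To see why the brackets matter, drop them: find’s implicit AND binds more tightly than -o, so the expression turns into (-type f AND -name "*.tex") OR -name "*.txt", and a directory that merely has a .txt name slips through. A sketch with a made-up tree:

```shell
dir=$(mktemp -d) || exit 1
trap 'rm -rf "$dir"' EXIT
cd "$dir" || exit 1

mkdir -p myfiles/old.txt   # made up: a directory whose name ends in .txt
touch myfiles/a.tex

# Without brackets: ( -type f AND -name "*.tex" ) OR -name "*.txt"
find myfiles -type f -name "*.tex" -o -name "*.txt" | sort
# myfiles/a.tex
# myfiles/old.txt   <- a directory!

# With brackets, -type f applies to both name tests
find myfiles -type f \( -name "*.tex" -o -name "*.txt" \)
# myfiles/a.tex

# Quoting the brackets works just as well as escaping them
find myfiles -type f '(' -name "*.tex" -o -name "*.txt" ')'
```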

Execute awk for every single file we found …

And now that we have a list of all the files we are interested in, let’s do our awk magic on every single one of them by using the -exec action of find:

find myfiles -type f \( -name "*.tex" -o -name "*.txt" \) \
    -exec awk 'FNR <= 15 && /HELLO/{print FILENAME}' {} +

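Everything between -exec and the closing “+” sign is the command find runs on the files it has found. The pair of curly brackets “{}” is the placeholder for the file names (including the needed path). With “+” at the end, find does not start awk once per file: it appends as many file names as fit on a single command line and starts awk only as often as needed. That is exactly what keeps us clear of the Argument list too long error.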
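The difference between “+” and the alternative terminator “\;” is easy to see with echo in place of awk. In this sketch with three made-up files, “+” produces a single echo call (one output line), while “\;” starts echo once per file:

```shell
dir=$(mktemp -d) || exit 1
trap 'rm -rf "$dir"' EXIT
cd "$dir" || exit 1

mkdir myfiles && touch myfiles/a.txt myfiles/b.txt myfiles/c.txt   # made-up files

# "+": names are collected and passed to as few echo calls as possible
find myfiles -type f -name "*.txt" -exec echo {} +
# one line with all three names (order depends on the directory)

# "\;": one echo call per file
find myfiles -type f -name "*.txt" -exec echo {} \;
# three lines, one name each
```

Both work with awk too, but with many files “+” saves a lot of process starts.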

Side note: For readability I broke the command line into two lines here. You can execute it exactly as shown or write everything on one single line, but then you have to remove the trailing backslash (\) at the end of the first line.

The final solution with find, awk and uniq

And do not forget the uniq-command to remove the unwanted duplicate filenames:

find myfiles -type f \( -name "*.tex" -o -name "*.txt" \) \
    -exec awk 'FNR <= 15 && /HELLO/{print FILENAME}' {} + | uniq

Take a step back! Isn’t this a cool-looking command line? ;-)
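If you want to see it in action, here is an end-to-end sketch with a hypothetical myfiles tree in a temporary directory. late.txt has its only HELLO on line 20 and readme.md has the wrong extension, so neither should show up. The trailing sort is my addition, only there to make the output order predictable.

```shell
dir=$(mktemp -d) || exit 1
trap 'rm -rf "$dir"' EXIT
cd "$dir" || exit 1

# Made-up sample tree
mkdir -p myfiles/chapters
printf 'HELLO\nHELLO again\n'  > myfiles/intro.tex            # hits on lines 1 and 2
printf 'abstract\nsay HELLO\n' > myfiles/chapters/part1.txt   # hit on line 2
printf 'HELLO\n'               > myfiles/readme.md            # wrong extension
# 19 filler lines, then HELLO on line 20 (outside the first 15 lines)
awk 'BEGIN { for (i = 1; i < 20; i++) print "filler"; print "HELLO" }' > myfiles/late.txt

find myfiles -type f \( -name "*.tex" -o -name "*.txt" \) \
    -exec awk 'FNR <= 15 && /HELLO/{print FILENAME}' {} + | uniq | sort
# myfiles/chapters/part1.txt
# myfiles/intro.tex
```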

Mission accomplished!
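The original question asks for “a certain string within the first N lines”. One way to generalize the one-liner is a small shell function. This is a sketch of one possible variation, not the method above: the name find_in_head and its argument order are hypothetical. It passes N and the string in with awk -v, compares the string literally with index() instead of as a regular expression, and uses nextfile (available in gawk, recent mawk and the BSD/macOS awk) to stop reading a file early. The result does not depend on nextfile: the seen array and the FNR <= n test already keep out duplicates and late matches. One caveat: awk -v interprets backslash escapes in the value, so search strings containing backslashes need extra care.

```shell
# Hypothetical helper (name and interface are assumptions):
#   find_in_head DIR N STRING
# Lists every *.tex/*.txt file below DIR whose first N lines contain STRING,
# compared as a literal string rather than as a regular expression.
find_in_head() {
    find "$1" -type f \( -name "*.tex" -o -name "*.txt" \) \
        -exec awk -v n="$2" -v s="$3" '
            # hit within the first n lines: report the file once, then skip the rest
            FNR <= n && index($0, s) && !seen[FILENAME]++ { print FILENAME; nextfile }
            # beyond line n nothing can match any more, so move on to the next file
            FNR > n { nextfile }
        ' {} +
}

# Usage with a made-up tree in a temporary directory
dir=$(mktemp -d) || exit 1
trap 'rm -rf "$dir"' EXIT
mkdir "$dir/docs"
printf 'title\nTODO: fix intro\n' > "$dir/docs/a.txt"   # TODO on line 2
printf 'x\ny\nz\nTODO\n'          > "$dir/docs/b.tex"   # TODO on line 4
printf 'TODO\n'                   > "$dir/docs/c.md"    # wrong extension

find_in_head "$dir/docs" 3 "TODO"   # prints only the path of docs/a.txt
```

Since every file is reported at most once, the trailing uniq is no longer needed here.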

Here is what to do next

If you followed me through this article, you have certainly realized that knowing a few internals of how things work at the Linux command line can save you a lot of time and frustration.

And sometimes it’s just fun to leverage these powerful mechanics.

If you wanna know more about such “internal mechanisms” of the Linux command line, written especially for Linux beginners, have a look at “The Linux Confidence Framework”.

In this framework I guide you through 5 simple steps to feel comfortable at the Linux command line.

This framework comes as a free pdf and you can get it here.

Wanna take an unfair advantage?

When it comes to working at the Linux command line, at the end of the day it is always about knowing the right tool for the right task.

And it is about knowing the tools that are almost certainly available on whatever Linux system you are currently on.

To give you all the tools for your day-to-day work at the Linux command line, I have created “The ShellToolbox”.

This book gives you everything, from the very basic commands to simple “networking stuff”:

- the very basic commands

- working with files and filesystems

- managing processes

- managing users and permissions

- software management

- hardware analysis

- simple shell scripting

- the tools you need for simple “networking stuff”

Everything in one single, easy to read book. With explanations and example calls for illustration.

If you are interested, go to shelltoolbox.com and have a look (as long as it is available).